1.03


This HOWTO discusses Python 2.x’s support for Unicode, and explains various problems that people commonly encounter when trying to work with Unicode. For the Python 3 version, see <https://docs.python.org/3/howto/unicode.html>.

Introduction to Unicode¶

History of Character Codes¶

In 1968, the American Standard Code for Information Interchange, better known by its acronym ASCII, was standardized. ASCII defined numeric codes for various characters, with the numeric values running from 0 to 127. For example, the lowercase letter ‘a’ is assigned 97 as its code value.

ASCII was an American-developed standard, so it only defined unaccented characters. There was an ‘e’, but no ‘é’ or ‘Í’. This meant that languages which required accented characters couldn’t be faithfully represented in ASCII. (Actually the missing accents matter for English, too, which contains words such as ‘naïve’ and ‘café’, and some publications have house styles which require spellings such as ‘coöperate’.)

For a while people just wrote programs that didn’t display accents. I remember looking at Apple ][ BASIC programs, published in French-language publications in the mid-1980s, that had lines like these:

Those messages should contain accents, and they just look wrong to someone who can read French.

In the 1980s, almost all personal computers were 8-bit, meaning that bytes could hold values ranging from 0 to 255. ASCII codes only went up to 127, so some machines assigned values between 128 and 255 to accented characters. Different machines had different codes, however, which led to problems exchanging files. Eventually various commonly used sets of values for the 128–255 range emerged. Some were true standards, defined by the International Organization for Standardization, and some were de facto conventions that were invented by one company or another and managed to catch on.

255 characters aren’t very many. For example, you can’t fit both the accented characters used in Western Europe and the Cyrillic alphabet used for Russian into the 128–255 range because there are more than 128 such characters.

You could write files using different codes (all your Russian files in a coding system called KOI8, all your French files in a different coding system called Latin1), but what if you wanted to write a French document that quotes some Russian text? In the 1980s people began to want to solve this problem, and the Unicode standardization effort began.

Unicode started out using 16-bit characters instead of 8-bit characters. 16 bits means you have 2^16 = 65,536 distinct values available, making it possible to represent many different characters from many different alphabets; an initial goal was to have Unicode contain the alphabets for every single human language. It turns out that even 16 bits isn’t enough to meet that goal, and the modern Unicode specification uses a wider range of codes, 0–1,114,111 (0x10ffff in base-16).

There’s a related ISO standard, ISO 10646. Unicode and ISO 10646 were originally separate efforts, but the specifications were merged with the 1.1 revision of Unicode.

(This discussion of Unicode’s history is highly simplified. I don’t think the average Python programmer needs to worry about the historical details; consult the Unicode consortium site listed in the References for more information.)

Definitions¶

A character is the smallest possible component of a text. ‘A’, ‘B’, ‘C’, etc., are all different characters. So are ‘È’ and ‘Í’. Characters are abstractions, and vary depending on the language or context you’re talking about. For example, the symbol for ohms (Ω) is usually drawn much like the capital letter omega (Ω) in the Greek alphabet (they may even be the same in some fonts), but these are two different characters that have different meanings.

The Unicode standard describes how characters are represented by code points. A code point is an integer value, usually denoted in base 16. In the standard, a code point is written using the notation U+12ca to mean the character with value 0x12ca (4810 decimal). The Unicode standard contains a lot of tables listing characters and their corresponding code points:

Strictly, these definitions imply that it’s meaningless to say ‘this is character U+12ca’. U+12ca is a code point, which represents some particular character; in this case, it represents the character ‘ETHIOPIC SYLLABLE WI’. In informal contexts, this distinction between code points and characters will sometimes be forgotten.

A character is represented on a screen or on paper by a set of graphical elements that’s called a glyph. The glyph for an uppercase A, for example, is two diagonal strokes and a horizontal stroke, though the exact details will depend on the font being used. Most Python code doesn’t need to worry about glyphs; figuring out the correct glyph to display is generally the job of a GUI toolkit or a terminal’s font renderer.

Encodings¶

To summarize the previous section: a Unicode string is a sequence of code points, which are numbers from 0 to 0x10ffff. This sequence needs to be represented as a set of bytes (meaning, values from 0–255) in memory. The rules for translating a Unicode string into a sequence of bytes are called an encoding.

The first encoding you might think of is an array of 32-bit integers. In this representation, the string “Python” would look like this:

This representation is straightforward but using it presents a number of problems.

- It’s not portable; different processors order the bytes differently.

- It’s very wasteful of space. In most texts, the majority of the code points are less than 127, or less than 255, so a lot of space is occupied by zero bytes. The above string takes 24 bytes compared to the 6 bytes needed for an ASCII representation. Increased RAM usage doesn’t matter too much (desktop computers have megabytes of RAM, and strings aren’t usually that large), but expanding our usage of disk and network bandwidth by a factor of 4 is intolerable.

- It’s not compatible with existing C functions such as strlen(), so a new family of wide string functions would need to be used.

- Many Internet standards are defined in terms of textual data, and can’t handle content with embedded zero bytes.

Generally people don’t use this encoding, instead choosing other encodings that are more efficient and convenient. UTF-8 is probably the most commonly supported encoding; it will be discussed below.
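To make the space cost of the 32-bit representation concrete, here is a minimal sketch that encodes the same string both ways. It uses Python’s 'utf-32-le' codec as a stand-in for the array of 32-bit integers; that choice is an assumption made for illustration (the codec fixes the byte order to little-endian and writes no byte-order mark). The snippet is written to run on both Python 2.7 and 3.x.

```python
# Compare a fixed-width 32-bit representation with plain ASCII.
text = u'Python'

# 'utf-32-le' stores each code point as four bytes, least significant first.
wide = text.encode('utf-32-le')
narrow = text.encode('ascii')

wide_size = len(wide)               # four bytes per code point
narrow_size = len(narrow)           # one byte per code point
zero_bytes = wide.count(b'\x00')    # padding bytes that carry no information

print((wide_size, narrow_size, zero_bytes))
```

Three out of every four bytes in the wide form are zero for this string, which is the waste the list above complains about.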

Encodings don’t have to handle every possible Unicode character, and most encodings don’t. For example, Python’s default encoding is the ‘ascii’ encoding. The rules for converting a Unicode string into the ASCII encoding are simple; for each code point:

- If the code point is < 128, each byte is the same as the value of the code point.

- If the code point is 128 or greater, the Unicode string can’t be represented in this encoding. (Python raises a UnicodeEncodeError exception in this case.)

Latin-1, also known as ISO-8859-1, is a similar encoding. Unicode code points 0–255 are identical to the Latin-1 values, so converting to this encoding simply requires converting code points to byte values; if a code point larger than 255 is encountered, the string can’t be encoded into Latin-1.
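The ASCII and Latin-1 rules just described can be checked with a short sketch. The sample strings are arbitrary choices of mine, and the code is written to run on both Python 2.7 and 3.x.

```python
word = u'caf\xe9'    # U+00E9 is 233: above the ASCII range, inside Latin-1
euro = u'\u20ac'     # U+20AC is 8364: above the Latin-1 range

# ASCII cannot represent code point 233.
try:
    word.encode('ascii')
    ascii_ok = True
except UnicodeEncodeError:
    ascii_ok = False

# Latin-1 maps each code point 0-255 to the byte with the same value.
latin1_bytes = word.encode('latin-1')

# Latin-1 cannot represent code point 8364.
try:
    euro.encode('latin-1')
    euro_ok = True
except UnicodeEncodeError:
    euro_ok = False

print((ascii_ok, latin1_bytes, euro_ok))
```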

Encodings don’t have to be simple one-to-one mappings like Latin-1. Consider IBM’s EBCDIC, which was used on IBM mainframes. Letter values weren’t in one block: ‘a’ through ‘i’ had values from 129 to 137, but ‘j’ through ‘r’ were 145 through 153. If you wanted to use EBCDIC as an encoding, you’d probably use some sort of lookup table to perform the conversion, but this is largely an internal detail.

UTF-8 is one of the most commonly used encodings. UTF stands for “Unicode Transformation Format”, and the ‘8’ means that 8-bit numbers are used in the encoding. (There’s also a UTF-16 encoding, but it’s less frequently used than UTF-8.) UTF-8 uses the following rules:

- If the code point is <128, it’s represented by the corresponding byte value.

- If the code point is between 128 and 0x7ff, it’s turned into two byte values between 128 and 255.

- Code points >0x7ff are turned into three- or four-byte sequences, where each byte of the sequence is between 128 and 255.
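The three rules above can be observed directly by encoding one code point from each range. This is a small sketch with sample characters of my own choosing; it runs on both Python 2.7 and 3.x.

```python
# One sample per size class: U+0061, U+00E9, U+20AC, U+1F600.
samples = [u'a', u'\xe9', u'\u20ac', u'\U0001f600']

# bytearray() yields integers on both Python 2 and 3.
encoded = [bytearray(s.encode('utf-8')) for s in samples]
lengths = [len(e) for e in encoded]

# Every byte of a multi-byte sequence lies between 128 and 255.
multibyte_high = all(b >= 128 for e in encoded[1:] for b in e)

for s, e in zip(samples, encoded):
    print((repr(s), [hex(b) for b in e]))
```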

UTF-8 has several convenient properties:

- It can handle any Unicode code point.

- A Unicode string is turned into a string of bytes containing no embedded zero bytes. This avoids byte-ordering issues, and means UTF-8 strings can be processed by C functions such as strcpy() and sent through protocols that can’t handle zero bytes.

- A string of ASCII text is also valid UTF-8 text.

- UTF-8 is fairly compact; the majority of code points are turned into two bytes, and values less than 128 occupy only a single byte.

- If bytes are corrupted or lost, it’s possible to determine the start of the next UTF-8-encoded code point and resynchronize. It’s also unlikely that random 8-bit data will look like valid UTF-8.
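The resynchronization property can be sketched in a few lines. In UTF-8, every byte after the first one of a multi-byte sequence has the bit pattern 10xxxxxx, so a reader that lands in the middle of a sequence can skip such bytes until it finds the start of the next code point. The helper name next_start is hypothetical, introduced only for this illustration; the code runs on both Python 2.7 and 3.x.

```python
def next_start(data, pos):
    """Return the index of the first byte at or after pos that is not a
    UTF-8 continuation byte (bit pattern 10xxxxxx)."""
    data = bytearray(data)
    while pos < len(data) and (data[pos] & 0xC0) == 0x80:
        pos += 1
    return pos

encoded = u'a\u20acb'.encode('utf-8')    # 'a', three bytes for U+20AC, 'b'
damaged = encoded[2:]                    # simulate losing the first two bytes

start = next_start(damaged, 0)           # skip the orphaned continuation bytes
recovered = damaged[start:].decode('utf-8')

print((start, recovered))
```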

References¶

The Unicode Consortium site at <http://www.unicode.org> has character charts, a glossary, and PDF versions of the Unicode specification. Be prepared for some difficult reading. <http://www.unicode.org/history/> is a chronology of the origin and development of Unicode.

To help understand the standard, Jukka Korpela has written an introductory guide to reading the Unicode character tables, available at <https://www.cs.tut.fi/~jkorpela/unicode/guide.html>.

Another good introductory article was written by Joel Spolsky <http://www.joelonsoftware.com/articles/Unicode.html>. If this introduction didn’t make things clear to you, you should try reading this alternate article before continuing.

Wikipedia entries are often helpful; see the entries for “character encoding” <http://en.wikipedia.org/wiki/Character_encoding> and UTF-8 <http://en.wikipedia.org/wiki/UTF-8>, for example.

Python 2.x’s Unicode Support¶

Now that you’ve learned the rudiments of Unicode, we can look at Python’s Unicode features.

The Unicode Type¶

Unicode strings are expressed as instances of the unicode type, one of Python’s repertoire of built-in types. It derives from an abstract type called basestring, which is also an ancestor of the str type; you can therefore check if a value is a string type with isinstance(value, basestring). Under the hood, Python represents Unicode strings as either 16- or 32-bit integers, depending on how the Python interpreter was compiled.

The unicode() constructor has the signature unicode(string[, encoding, errors]). All of its arguments should be 8-bit strings. The first argument is converted to Unicode using the specified encoding; if you leave off the encoding argument, the ASCII encoding is used for the conversion, so characters greater than 127 will be treated as errors:

The errors argument specifies the response when the input string can’t be converted according to the encoding’s rules. Legal values for this argument are ‘strict’ (raise a UnicodeDecodeError exception), ‘replace’ (add U+FFFD, ‘REPLACEMENT CHARACTER’), or ‘ignore’ (just leave the character out of the Unicode result). The following examples show the differences:

Encodings are specified as strings containing the encoding’s name. Python 2.7 comes with roughly 100 different encodings; see the Python Library Reference at Standard Encodings for a list. Some encodings have multiple names; for example, ‘latin-1’, ‘iso_8859_1’ and ‘8859’ are all synonyms for the same encoding.
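The three values of the errors argument described above can be compared side by side. This sketch uses the .decode() method of 8-bit strings instead of the unicode() constructor so that it runs on Python 3 as well as 2.7; on Python 2, unicode(raw, 'utf-8', 'replace') takes the same arguments. The input bytes are an arbitrary example.

```python
raw = b'\x80abc'    # 0x80 cannot start a UTF-8 sequence

# 'strict' (the default) raises an exception.
try:
    raw.decode('utf-8', 'strict')
    strict_result = 'decoded'
except UnicodeDecodeError:
    strict_result = 'UnicodeDecodeError'

# 'replace' substitutes U+FFFD, REPLACEMENT CHARACTER.
replaced = raw.decode('utf-8', 'replace')

# 'ignore' silently drops the offending byte.
ignored = raw.decode('utf-8', 'ignore')

print((strict_result, repr(replaced), repr(ignored)))
```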

One-character Unicode strings can also be created with the unichr() built-in function, which takes integers and returns a Unicode string of length 1 that contains the corresponding code point. The reverse operation is the built-in ord() function that takes a one-character Unicode string and returns the code point value:

Instances of the unicode type have many of the same methods as the 8-bit string type for operations such as searching and formatting:

Note that the arguments to these methods can be Unicode strings or 8-bit strings. 8-bit strings will be converted to Unicode before carrying out the operation; Python’s default ASCII encoding will be used, so characters greater than 127 will cause an exception:
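As a rough illustration of unichr(), ord(), and the string methods mentioned above, here is a sketch that runs on both Python 2.7 and 3.x. Python 3 has no unichr(), so the snippet falls back to chr(), which plays the same role there; the sample sentence is my own. The implicit ASCII conversion of 8-bit arguments is specific to Python 2 and is not exercised here.

```python
try:
    unichr                  # Python 2 built-in
except NameError:
    unichr = chr            # Python 3: chr() returns a one-character str

ch = unichr(40960)          # code point 0xA000
code_point = ord(u'\u12ca') # back from a character to its integer value

s = u'caf\xe9 au lait'
count_a = s.count(u'a')                  # searching
position = s.find(u'\xe9')               # index of the accented letter
swapped = s.replace(u'caf\xe9', u'th\xe9')
shouted = s.upper()

print((repr(ch), code_point, count_a, position))
```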

Much Python code that operates on strings will therefore work with Unicode strings without requiring any changes to the code. (Input and output code needs more updating for Unicode; more on this later.)

Another important method is .encode([encoding], [errors='strict']), which returns an 8-bit string version of the Unicode string, encoded in the requested encoding. The errors parameter is the same as the parameter of the unicode() constructor, with one additional possibility; as well as ‘strict’, ‘ignore’, and ‘replace’, you can also pass ‘xmlcharrefreplace’ which uses XML’s character references. The following example shows the different results:

Python’s 8-bit strings have a .decode([encoding], [errors]) method that interprets the string using the given encoding:

The low-level routines for registering and accessing the available encodings are found in the codecs module. However, the encoding and decoding functions returned by this module are usually more low-level than is comfortable, so I’m not going to describe the codecs module here. If you need to implement a completely new encoding, you’ll need to learn about the codecs module interfaces, but implementing encodings is a specialized task that also won’t be covered here. Consult the Python documentation to learn more about this module.

The most commonly used part of the codecs module is the codecs.open() function which will be discussed in the section on input and output.

Unicode Literals in Python Source Code¶

In Python source code, Unicode literals are written as strings prefixed with the ‘u’ or ‘U’ character: u'abcdefghijk'. Specific code points can be written using the \u escape sequence, which is followed by four hex digits giving the code point. The \U escape sequence is similar, but expects 8 hex digits, not 4.

Unicode literals can also use the same escape sequences as 8-bit strings, including \x, but \x only takes two hex digits so it can’t express an arbitrary code point. Octal escapes can go up to U+01ff, which is octal 777.

Using escape sequences for code points greater than 127 is fine in small doses, but becomes an annoyance if you’re using many accented characters, as you would in a program with messages in French or some other accent-using language. You can also assemble strings using the unichr() built-in function, but this is even more tedious.

Ideally, you’d want to be able to write literals in your language’s natural encoding. You could then edit Python source code with your favorite editor which would display the accented characters naturally, and have the right characters used at runtime.
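For reference, the escape sequences described above can be compared in a short sketch. The sample characters are arbitrary; the u'' prefix is accepted by Python 2.7 and by Python 3.3 and later, so the code runs on both.

```python
# Three spellings of the same code point, U+00E9.
two_digit = u'\xe9'           # \x takes exactly two hex digits
four_digit = u'\u00e9'        # \u takes exactly four hex digits
eight_digit = u'\U000000e9'   # \U takes exactly eight hex digits
same = (two_digit == four_digit == eight_digit)

# \x cannot reach beyond U+00FF, so larger code points need \u or \U.
euro_code = ord(u'\u20ac')

print((same, euro_code))
```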

Python supports writing Unicode literals in any encoding, but you have to declare the encoding being used. This is done by including a special comment as either the first or second line of the source file:
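A declaration naming Latin-1, for instance, would be the comment line "# -*- coding: latin-1 -*-". To show its effect without depending on how this page itself is encoded, the sketch below builds a tiny source file in memory as raw bytes and compiles it; the file contents, the '<demo>' name, and the variable name value are all made up for the demonstration. It is written for Python 3 and is intended to behave the same way on 2.7.

```python
# Source code as it would sit on disk: raw bytes, not yet decoded.
# Byte 0xE9 is the letter e-acute in Latin-1; on its own it is not valid UTF-8.
source = b"# -*- coding: latin-1 -*-\nvalue = u'abcd\xe9'\n"

# compile() honours the coding declaration when given a byte string.
namespace = {}
exec(compile(source, '<demo>', 'exec'), namespace)

last_code_point = ord(namespace['value'][-1])
print(last_code_point)
```

The last character comes out as code point 233 because the declaration told Python to interpret byte 0xE9 as Latin-1.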

The syntax is inspired by Emacs’s notation for specifying variables local to a file. Emacs supports many different variables, but Python only supports ‘coding’. The -*- symbols indicate to Emacs that the comment is special; they have no significance to Python but are a convention. Python looks for coding: name or coding=name in the comment.

If you don’t include such a comment, the default encoding used will be ASCII. Versions of Python before 2.4 were Euro-centric and assumed Latin-1 as a default encoding for string literals; in Python 2.4, characters greater than 127 still work but result in a warning. For example, the following program has no encoding declaration:

When you run it with Python 2.4, it will output the following warning:

Python 2.5 and higher are stricter and will produce a syntax error:

Unicode Properties¶

The Unicode specification includes a database of information about code points. For each code point that’s defined, the information includes the character’s name, its category, the numeric value if applicable (Unicode has characters representing the Roman numerals and fractions such as one-third and four-fifths). There are also properties related to the code point’s use in bidirectional text and other display-related properties.

The following program displays some information about several characters, and prints the numeric value of one particular character:
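A sketch of such a program, built on the standard unicodedata module, might look like this. It is an illustrative reconstruction rather than necessarily the original listing; the five code points are sample choices, and the unichr fallback exists only so the code also runs on Python 3.

```python
import unicodedata

try:
    unichr                  # Python 2 built-in
except NameError:
    unichr = chr            # Python 3 equivalent

u = unichr(233) + unichr(0x0bf2) + unichr(3972) + unichr(6000) + unichr(13231)

# Collect index, code point in hex, category code, and official name.
rows = []
for i, c in enumerate(u):
    rows.append((i, '%04x' % ord(c), unicodedata.category(c),
                 unicodedata.name(c)))

for row in rows:
    print(row)

# Numeric value of the second character, TAMIL NUMBER ONE THOUSAND.
thousand = unicodedata.numeric(u[1])
print(thousand)
```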

When run, this prints:

The category codes are abbreviations describing the nature of the character. These are grouped into categories such as “Letter”, “Number”, “Punctuation”, or “Symbol”, which in turn are broken up into subcategories. To take the codes from the above output, 'Ll' means ‘Letter, lowercase’, 'No' means “Number, other”, 'Mn' is “Mark, nonspacing”, and 'So' is “Symbol, other”. See <http://www.unicode.org/reports/tr44/#General_Category_Values> for a list of category codes.

References¶

The Unicode and 8-bit string types are described in the Python library reference at Sequence Types — str, unicode, list, tuple, bytearray, buffer, xrange.

The documentation for the unicodedata module.

The documentation for the codecs module.

Marc-André Lemburg gave a presentation at EuroPython 2002 titled “Python and Unicode”. A PDF version of his slides is available at <https://downloads.egenix.com/python/Unicode-EPC2002-Talk.pdf>, and is an excellent overview of the design of Python’s Unicode features.

Reading and Writing Unicode Data¶

Once you’ve written some code that works with Unicode data, the next problem is input/output. How do you get Unicode strings into your program, and how do you convert Unicode into a form suitable for storage or transmission?

It’s possible that you may not need to do anything depending on your input sources and output destinations; you should check whether the libraries used in your application support Unicode natively. XML parsers often return Unicode data, for example. Many relational databases also support Unicode-valued columns and can return Unicode values from an SQL query.

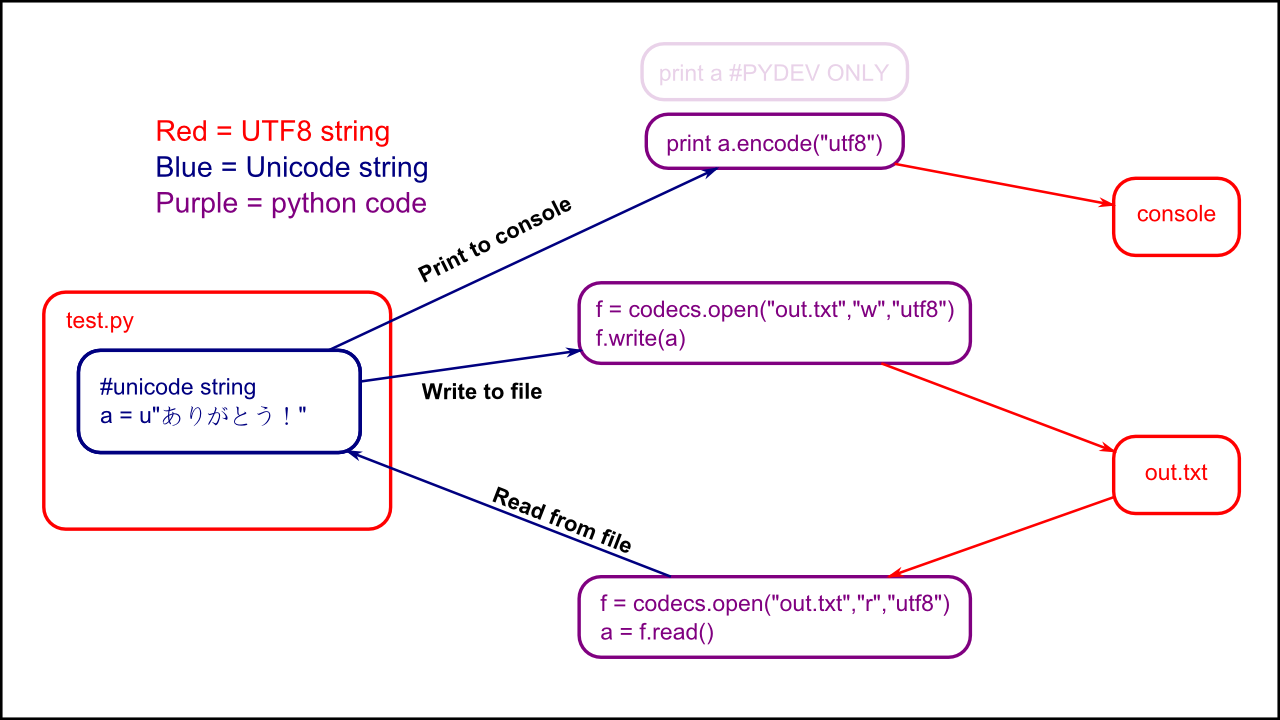

Unicode data is usually converted to a particular encoding before it gets written to disk or sent over a socket. It’s possible to do all the work yourself: open a file, read an 8-bit string from it, and convert the string with unicode(str, encoding). However, the manual approach is not recommended.

One problem is the multi-byte nature of encodings; one Unicode character can be represented by several bytes. If you want to read the file in arbitrary-sized chunks (say, 1K or 4K), you need to write error-handling code to catch the case where only part of the bytes encoding a single Unicode character are read at the end of a chunk. One solution would be to read the entire file into memory and then perform the decoding, but that prevents you from working with files that are extremely large; if you need to read a 2Gb file, you need 2Gb of RAM. (More, really, since for at least a moment you’d need to have both the encoded string and its Unicode version in memory.)

The solution would be to use the low-level decoding interface to catch the case of partial coding sequences. The work of implementing this has already been done for you: the codecs module includes a version of the open() function that returns a file-like object that assumes the file’s contents are in a specified encoding and accepts Unicode parameters for methods such as .read() and .write().

The function’s parameters are open(filename, mode='rb', encoding=None, errors='strict', buffering=1). mode can be 'r', 'w', or 'a', just like the corresponding parameter to the regular built-in open() function; add a '+' to update the file. buffering is similarly parallel to the standard function’s parameter. encoding is a string giving the encoding to use; if it’s left as None, a regular Python file object that accepts 8-bit strings is returned. Otherwise, a wrapper object is returned, and data written to or read from the wrapper object will be converted as needed. errors specifies the action for encoding errors and can be one of the usual values of ‘strict’, ‘ignore’, and ‘replace’.

Reading Unicode from a file is therefore simple:
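A minimal sketch of writing and then reading a file through codecs.open() follows. The scratch file is created with tempfile purely for the demonstration, and the sample text is arbitrary. The code runs on Python 2.7 and 3.x; on Python 3 the built-in open() accepts an encoding argument directly.

```python
import codecs
import os
import tempfile

# Hypothetical scratch file, created only for this demonstration.
handle, path = tempfile.mkstemp(suffix='.txt')
os.close(handle)

# Unicode in: the wrapper encodes to UTF-8 on the way to disk.
with codecs.open(path, 'w', encoding='utf-8') as f:
    f.write(u'\u4500 blah blah blah\n')

# Unicode out: the wrapper decodes each line as it is read.
with codecs.open(path, 'r', encoding='utf-8') as f:
    lines = [line for line in f]

# The bytes actually stored on disk.
with open(path, 'rb') as f:
    raw = f.read()

os.remove(path)
print((lines, raw))
```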

It’s also possible to open files in update mode, allowing both reading and writing:

Unicode character U+FEFF is used as a byte-order mark (BOM), and is often written as the first character of a file in order to assist with autodetection of the file’s byte ordering. Some encodings, such as UTF-16, expect a BOM to be present at the start of a file; when such an encoding is used, the BOM will be automatically written as the first character and will be silently dropped when the file is read. There are variants of these encodings, such as ‘utf-16-le’ and ‘utf-16-be’ for little-endian and big-endian encodings, that specify one particular byte ordering and don’t skip the BOM.
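The BOM behaviour can be seen without touching the filesystem by encoding and decoding in memory. This is a small sketch using the BOM constants from the codecs module; the sample text is arbitrary and the code runs on both Python 2.7 and 3.x.

```python
import codecs

text = u'hi'

with_bom = text.encode('utf-16')      # native byte order, BOM written first
le_only = text.encode('utf-16-le')    # fixed byte order, no BOM

starts_with_bom = with_bom.startswith(codecs.BOM_UTF16)

# Decoding with 'utf-16' consumes the BOM silently.
round_trip = with_bom.decode('utf-16')

# Decoding with 'utf-16-le' does not skip it: U+FEFF stays in the result.
kept_bom = (codecs.BOM_UTF16_LE + le_only).decode('utf-16-le')

print((starts_with_bom, repr(round_trip), repr(kept_bom)))
```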

Unicode filenames¶

Most of the operating systems in common use today support filenames that contain arbitrary Unicode characters. Usually this is implemented by converting the Unicode string into some encoding that varies depending on the system. For example, Mac OS X uses UTF-8 while Windows uses a configurable encoding; on Windows, Python uses the name “mbcs” to refer to whatever the currently configured encoding is. On Unix systems, there will only be a filesystem encoding if you’ve set the LANG or LC_CTYPE environment variables; if you haven’t, the default encoding is ASCII.

The sys.getfilesystemencoding() function returns the encoding to use on your current system, in case you want to do the encoding manually, but there’s not much reason to bother. When opening a file for reading or writing, you can usually just provide the Unicode string as the filename, and it will be automatically converted to the right encoding for you:

Functions in the os module such as os.stat() will also accept Unicode filenames.

os.listdir(), which returns filenames, raises an issue: should it return the Unicode version of filenames, or should it return 8-bit strings containing the encoded versions? os.listdir() will do both, depending on whether you provided the directory path as an 8-bit string or a Unicode string. If you pass a Unicode string as the path, filenames will be decoded using the filesystem’s encoding and a list of Unicode strings will be returned, while passing an 8-bit path will return the 8-bit versions of the filenames. For example, assuming the default filesystem encoding is UTF-8, running the following program:

will produce the following output:

The first list contains UTF-8-encoded filenames, and the second list contains the Unicode versions.
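The two behaviours of os.listdir() can be reproduced with a throwaway directory. In this sketch the directory comes from tempfile and the file name 'filename.txt' is a placeholder of mine; an ASCII name is used so that the result does not depend on the filesystem encoding of the machine running it. The code is written to run on both Python 2.7 and 3.x.

```python
import os
import shutil
import sys
import tempfile

fs_encoding = sys.getfilesystemencoding()

directory = tempfile.mkdtemp()
if isinstance(directory, bytes):     # Python 2: mkdtemp() returns an 8-bit string
    directory = directory.decode(fs_encoding)

# Create one empty file with a placeholder name.
open(os.path.join(directory, u'filename.txt'), 'w').close()

as_bytes = os.listdir(directory.encode(fs_encoding))   # 8-bit path in
as_text = os.listdir(directory)                        # Unicode path in

shutil.rmtree(directory)
print((as_bytes, as_text))
```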

Tips for Writing Unicode-aware Programs¶

This section provides some suggestions on writing software that deals with Unicode.

The most important tip is:

Software should only work with Unicode strings internally, converting to a particular encoding on output.

If you attempt to write processing functions that accept both Unicode and 8-bit strings, you will find your program vulnerable to bugs wherever you combine the two different kinds of strings. Python’s default encoding is ASCII, so whenever a character with an ASCII value > 127 is in the input data, you’ll get a UnicodeDecodeError because that character can’t be handled by the ASCII encoding.

It’s easy to miss such problems if you only test your software with data that doesn’t contain any accents; everything will seem to work, but there’s actually a bug in your program waiting for the first user who attempts to use characters > 127. A second tip, therefore, is:

Include characters > 127 and, even better, characters > 255 in your test data.

When using data coming from a web browser or some other untrusted source, a common technique is to check for illegal characters in a string before using the string in a generated command line or storing it in a database. If you’re doing this, be careful to check the string once it’s in the form that will be used or stored; it’s possible for encodings to be used to disguise characters. This is especially true if the input data also specifies the encoding; many encodings leave the commonly checked-for characters alone, but Python includes some encodings such as 'base64' that modify every single character.

For example, let’s say you have a content management system that takes a Unicode filename, and you want to disallow paths with a ‘/’ character. You might write this code:
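A sketch of the kind of check meant here, alongside a variant that validates the decoded form instead, is given below. The function names are hypothetical, nothing is actually opened or read, and codecs.decode() is used so that the same code runs on Python 2.7 and 3.x (where 'base64' is a bytes-to-bytes codec).

```python
import codecs

def contains_slash(value):
    """True if value (8-bit or Unicode string) contains a '/' character."""
    slash = b'/' if isinstance(value, bytes) else u'/'
    return slash in value

def naive_decode(encoded_name, encoding):
    # Flawed on purpose: the check runs on the still-encoded form.
    if contains_slash(encoded_name):
        raise ValueError("'/' not allowed in filenames")
    return codecs.decode(encoded_name, encoding)

def checked_decode(encoded_name, encoding):
    # Decode first, then validate the form that will actually be used.
    decoded = codecs.decode(encoded_name, encoding)
    if contains_slash(decoded):
        raise ValueError("'/' not allowed in filenames")
    return decoded

# The naive version lets a disguised path through.
leaked = naive_decode(b'L2V0Yy9wYXNzd2Q=', 'base64')

# The checked version rejects the same input.
try:
    checked_decode(b'L2V0Yy9wYXNzd2Q=', 'base64')
    rejected = False
except ValueError:
    rejected = True

print((leaked, rejected))
```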

However, if an attacker could specify the 'base64' encoding, they could pass 'L2V0Yy9wYXNzd2Q=', which is the base-64 encoded form of the string '/etc/passwd', to read a system file. The above code looks for '/' characters in the encoded form and misses the dangerous character in the resulting decoded form.

References¶

The PDF slides for Marc-André Lemburg’s presentation “Writing Unicode-aware Applications in Python” are available at <https://downloads.egenix.com/python/LSM2005-Developing-Unicode-aware-applications-in-Python.pdf> and discuss questions of character encodings as well as how to internationalize and localize an application.

Revision History and Acknowledgements¶

Thanks to the following people who have noted errors or offered suggestions on this article: Nicholas Bastin, Marius Gedminas, Kent Johnson, Ken Krugler, Marc-André Lemburg, Martin von Löwis, Chad Whitacre.

Version 1.0: posted August 5 2005.

Version 1.01: posted August 7 2005. Corrects factual and markup errors; adds several links.

Version 1.02: posted August 16 2005. Corrects factual errors.

Version 1.03: posted June 20 2010. Notes that Python 3.x is not covered, and that the HOWTO only covers 2.x.

A recent discussion on the python-ideas mailing list made it clear that we (i.e. the core Python developers) need to provide some clearer guidance on how to handle text processing tasks that trigger exceptions by default in Python 3, but were previously swept under the rug by Python 2’s blithe assumption that all files are encoded in “latin-1”.

While we’ll have something in the official docs before too long, this is my own preliminary attempt at summarising the options for processing text files, and the various trade-offs between them.

- Text File Processing

The obvious question to ask is what changed in Python 3 so that the common approaches that developers used to use for text processing in Python 2 have now started to throw UnicodeDecodeError and UnicodeEncodeError in Python 3.

The key difference is that the default text processing behaviour in Python 3 aims to detect text encoding problems as early as possible - either when reading improperly encoded text (indicated by UnicodeDecodeError) or when being asked to write out a text sequence that cannot be correctly represented in the target encoding (indicated by UnicodeEncodeError).

This contrasts with the Python 2 approach which allowed data corruption by default and strict correctness checks had to be requested explicitly. That could certainly be convenient when the data being processed was predominantly ASCII text, and the occasional bit of data corruption was unlikely to be even detected, let alone cause problems, but it’s hardly a solid foundation for building robust multilingual applications (as anyone that has ever had to track down an errant UnicodeError in Python 2 will know).

However, Python 3 does provide a number of mechanisms for relaxing the default strict checks in order to handle various text processing use cases (in particular, use cases where “best effort” processing is acceptable, and strict correctness is not required). This article aims to explain some of them by looking at cases where it would be appropriate to use them.

Note that many of the features I discuss below are available in Python 2 as well, but you have to explicitly access them via the unicode type and the codecs module. In Python 3, they’re part of the behaviour of the str type and the open builtin.

To process text effectively in Python 3, it’s necessary to learn at least a tiny amount about Unicode and text encodings:

- Python 3 always stores text strings as sequences of Unicode code points. These are values in the range 0-0x10FFFF. They don’t always correspond directly to the characters you read on your screen, but that distinction doesn’t matter for most text manipulation tasks.

- To store text as binary data, you must specify an encoding for that text.

- The process of converting from a sequence of bytes (i.e. binary data) to a sequence of code points (i.e. text data) is decoding, while the reverse process is encoding.

- For historical reasons, the most widely used encoding is ascii, which can only handle Unicode code points in the range 0-0x7F (i.e. ASCII is a 7-bit encoding).

- There are a wide variety of ASCII compatible encodings, which ensure that any appearance of a valid ASCII value in the binary data refers to the corresponding ASCII character.

- “utf-8” is becoming the preferred encoding for many applications, as it is an ASCII-compatible encoding that can encode any valid Unicode code point.

- “latin-1” is another significant ASCII-compatible encoding, as it maps byte values directly to the first 256 Unicode code points. (Note that Windows has its own “latin-1” variant called cp1252, but, unlike the ISO “latin-1” implemented by the Python codec with that name, the Windows specific variant doesn’t map all 256 possible byte values.)

- There are also many ASCII incompatible encodings in widespread use, particularly in Asian countries (which had to devise their own solutions before the rise of Unicode) and on platforms such as Windows, Java and the .NET CLR, where many APIs accept text as UTF-16 encoded data.

- The locale.getpreferredencoding() call reports the encoding that Python will use by default for most operations that require an encoding (e.g. reading in a text file without a specified encoding). This is designed to aid interoperability between Python and the host operating system, but can cause problems with interoperability between systems (if encoding issues are not managed consistently).

- The sys.getfilesystemencoding() call reports the encoding that Python will use by default for most operations that both require an encoding and involve textual metadata in the filesystem (e.g. determining the results of os.listdir()).

- If you’re a native English speaker residing in an English speaking country (like me!) it’s tempting to think “but Python 2 works fine, why are you bothering me with all this Unicode malarkey?”. It’s worth trying to remember that we’re actually a minority on this planet and, for most people on Earth, ASCII and latin-1 can’t even handle their name, let alone any other text they might want to write or process in their native language.

To help standardise various techniques for dealing with Unicode encoding and decoding errors, Python includes a concept of Unicode error handlers that are automatically invoked whenever a problem is encountered in the process of encoding or decoding text.

I’m not going to cover all of them in this article, but three are of particular significance:

- strict: this is the default error handler that just raises UnicodeDecodeError for decoding problems and UnicodeEncodeError for encoding problems.

- surrogateescape: this is the error handler that Python uses for most OS facing APIs to gracefully cope with encoding problems in the data supplied by the OS. It handles decoding errors by squirreling the data away in a little used part of the Unicode code point space (for those interested in more detail, see PEP 383). When encoding, it translates those hidden away values back into the exact original byte sequence that failed to decode correctly. Just as this is useful for OS APIs, it can make it easier to gracefully handle encoding problems in other contexts.

- backslashreplace: this is an encoding error handler that converts code points that can’t be represented in the target encoding to the equivalent Python string numeric escape sequence. It makes it easy to ensure that UnicodeEncodeError will never be thrown, but doesn’t lose much information while doing so (since we don’t want encoding problems hiding error output, this error handler is enabled on sys.stderr by default).
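The three handlers can be tried out on a couple of short inputs. This is a minimal Python 3 sketch; the byte string and the text are arbitrary examples of mine.

```python
raw = b'caf\xe9'    # Latin-1 encoded bytes, which are not valid UTF-8

# strict: decoding fails outright.
try:
    raw.decode('utf-8', errors='strict')
    strict_failed = False
except UnicodeDecodeError:
    strict_failed = True

# surrogateescape: the undecodable byte 0xE9 is tucked away as U+DCE9 ...
text = raw.decode('utf-8', errors='surrogateescape')
# ... and encoding with the same handler restores the original bytes.
restored = text.encode('utf-8', errors='surrogateescape')

# backslashreplace: unencodable code points become Python escape sequences.
escaped = '\u20ac10'.encode('ascii', errors='backslashreplace')

print(strict_failed, ascii(text), restored, escaped)
```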

One alternative that is always available is to open files in binary mode and process them as bytes rather than as text. This can work in many cases, especially those where the ASCII markers are embedded in genuinely arbitrary binary data.
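A rough illustration of the binary mode approach, assuming a made-up log format in which lines start with an ASCII level marker and may carry arbitrary bytes afterwards (the file name, the marker and the data are all hypothetical):

```python
import os
import tempfile

# Hypothetical data: ASCII markers followed by arbitrary binary payloads.
data = b"INFO ok\nERROR \xff\xfe\x00 payload\nINFO done\n"

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "mixed.log")
    with open(path, "wb") as f:
        f.write(data)

    # Binary mode: no decoding happens, so no Unicode errors are possible.
    # Iterating over a binary file yields lines split on b"\n".
    with open(path, "rb") as f:
        error_lines = [line for line in f if line.startswith(b"ERROR")]

print(error_lines)
```

Because the comparison is made against a bytes literal, the non-ASCII payload never needs to be interpreted as text.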

However, for both “text data with unknown encoding” and “text data with known encoding, but potentially containing encoding errors”, it is often preferable to get them into a form that can be handled as text strings. In particular, some APIs that accept both bytes and text may be very strict about the encoding of the bytes they accept (for example, the urllib.parse module accepts only pure ASCII data for processing as bytes, but will happily process text strings containing non-ASCII code points).

This section explores a number of use cases that can arise when processing text. Text encoding is a sufficiently complex topic that there’s no one size fits all answer - the right answer for a given application will depend on factors like:

- how much control you have over the text encodings used

- whether avoiding program failure is more important than avoiding data corruption or vice-versa

- how common encoding errors are expected to be, and whether they need to be handled gracefully or can simply be rejected as invalid input

Use case: the files to be processed are in an ASCII compatible encoding, but you don’t know exactly which one. All files must be processed without triggering any exceptions, but some risk of data corruption is deemed acceptable (e.g. collating log files from multiple sources where some data errors are acceptable, so long as the logs remain largely intact).

Approach: use the “latin-1” encoding to map byte values directly to the first 256 Unicode code points. This is the closest equivalent Python 3 offers to the permissive Python 2 text handling model.

Example:

    f = open(fname, encoding='latin-1')

Note: While the Windows cp1252 encoding is also sometimes referred to as “latin-1”, it doesn’t map all possible byte values, and thus needs to be used in combination with the surrogateescape error handler to ensure it never throws UnicodeDecodeError. The latin-1 encoding in Python implements ISO_8859-1:1987 which maps all possible byte values to the first 256 Unicode code points, and thus ensures decoding errors will never occur regardless of the configured error handler.

Consequences:

- data will not be corrupted if it is simply read in, processed as ASCII text, and written back out again.

- will never raise UnicodeDecodeError when reading data

- will still raise UnicodeEncodeError if code points above 0xFF (e.g. smart quotes copied from a word processing program) are added to the text string before it is encoded back to bytes. To prevent such errors, use the backslashreplace error handler (or one of the other error handlers that replaces Unicode code points without a representation in the target encoding with sequences of ASCII code points).

- data corruption may occur if the source data is in an ASCII incompatible encoding (e.g. UTF-16)

- corruption may occur if data is written back out using an encoding other than latin-1

- corruption may occur if the non-ASCII elements of the string are modified directly (e.g. for a variable width encoding like UTF-8 that has been decoded as latin-1 instead, slicing the string at an arbitrary point may split a multi-byte character into two pieces)
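The consequences listed for the latin-1 approach can be checked with a small round trip. This is only a sketch: the sample data happens to be UTF-8 (which the reading code is assumed not to know), and the file names are placeholders.

```python
import os
import tempfile

# Sample input whose real encoding (UTF-8 here) is unknown to the reader.
original = "na\u00efve caf\u00e9\n".encode("utf-8")

with tempfile.TemporaryDirectory() as tmp:
    src = os.path.join(tmp, "in.txt")
    dst = os.path.join(tmp, "out.txt")
    with open(src, "wb") as f:
        f.write(original)

    # newline="" turns off newline translation so bytes round-trip exactly.
    with open(src, encoding="latin-1", newline="") as f:
        text = f.read()          # never raises UnicodeDecodeError
    with open(dst, "w", encoding="latin-1", newline="") as f:
        f.write(text)
    with open(dst, "rb") as f:
        copied = f.read()

# Code points above 0xFF cannot be encoded as latin-1 ...
try:
    "\u201csmart\u201d".encode("latin-1")
    smart_quote_failed = False
except UnicodeEncodeError:
    smart_quote_failed = True

# ... unless a replacing error handler such as backslashreplace is used.
escaped = "\u201csmart\u201d".encode("latin-1", errors="backslashreplace")

print(copied == original, smart_quote_failed, escaped)
```

Note that `text` contains mojibake for the accented characters (each UTF-8 byte became its own code point), which is why modifying the non-ASCII parts directly risks corruption.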

Use case: the files to be processed are in an ASCII compatible encoding, but you don’t know exactly which one. All files must be processed without triggering any exceptions, but some Unicode related errors are acceptable in order to reduce the risk of data corruption (e.g. collating log files from multiple sources, but wanting more explicit notification when the collated data is at risk of corruption due to programming errors that violate the assumption of writing the data back out only in its original encoding)

Approach: use the ascii encoding with the surrogateescape error handler.

Example:

    f = open(fname, encoding='ascii', errors='surrogateescape')

Consequences:

- data will not be corrupted if it is simply read in, processed as ASCII text, and written back out again.

- will never raise UnicodeDecodeError when reading data

- will still raise UnicodeEncodeError if non-ASCII code points (e.g. smart quotes copied from a word processing program) are added to the text string before it is encoded back to bytes. To prevent such errors, use the backslashreplace error handler (or one of the other error handlers that replaces Unicode code points without a representation in the target encoding with sequences of ASCII code points).

- will also raise UnicodeEncodeError if an attempt is made to encode a text string containing escaped byte values without enabling the surrogateescape error handler (or an even more tolerant handler like backslashreplace).

- some Unicode processing libraries that ensure a code point sequence is valid text may complain about the escaping mechanism used (I’m not going to explain what it means here, but the phrase “lone surrogate” is a hint that something along those lines may be happening - the fact that “surrogate” also appears in the name of the error handler is not a coincidence).

- data corruption may still occur if the source data is in an ASCII incompatible encoding (e.g. UTF-16)

- data corruption is also still possible if the escaped portions of the string are modified directly
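A short sketch of the ascii plus surrogateescape approach, using an illustrative UTF-8 sample and placeholder file names. It shows the exact round trip and the more explicit failure when the escaped data is written out without the handler.

```python
import os
import tempfile

# Sample input in an ASCII compatible encoding the reader does not know.
original = "caf\u00e9 log entry\n".encode("utf-8")

with tempfile.TemporaryDirectory() as tmp:
    src = os.path.join(tmp, "in.log")
    dst = os.path.join(tmp, "out.log")
    with open(src, "wb") as f:
        f.write(original)

    # Non-ASCII bytes become lone surrogates instead of raising an error.
    with open(src, encoding="ascii", errors="surrogateescape", newline="") as f:
        text = f.read()

    # Writing with the same settings restores the original bytes exactly.
    with open(dst, "w", encoding="ascii", errors="surrogateescape", newline="") as f:
        f.write(text)
    with open(dst, "rb") as f:
        copied = f.read()

# Encoding the escaped text without the handler fails loudly, even for
# UTF-8, because lone surrogates are not valid text.
try:
    text.encode("utf-8")
    strict_failed = False
except UnicodeEncodeError:
    strict_failed = True

print(ascii(text), copied == original, strict_failed)
```

Compared with the latin-1 approach, the escaped bytes are clearly distinguishable from real text, at the cost of the additional error cases described above.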

Use case: the files to be processed are in a consistent encoding, the encoding can be determined from the OS details and locale settings and it is acceptable to refuse to process files that are not properly encoded.

Approach: simply open the file in text mode. This use case describes the default behaviour in Python 3.

Example:

    f = open(fname)

Consequences:

- UnicodeDecodeError may be thrown when reading such files (if the data is not actually in the encoding returned by locale.getpreferredencoding())

- UnicodeEncodeError may be thrown when writing such files (if attempting to write out code points which have no representation in the target encoding).

- the surrogateescape error handler can be used to be more tolerant of encoding errors if it is necessary to make a best effort attempt to process files that contain such errors instead of rejecting them outright as invalid input.
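To see which encoding the default text mode picks up on a given machine, something like the following sketch can be used. The printed values depend on the OS and locale settings, so no particular output is assumed; the file name is a placeholder.

```python
import locale
import os
import tempfile

# The locale-derived encoding that text mode falls back to when no
# explicit encoding argument is given.
default_encoding = locale.getpreferredencoding(False)

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "example.txt")

    # No encoding argument: Python chooses based on the environment.
    with open(path, "w") as f:
        f.write("plain ASCII text\n")
        used_encoding = f.encoding

    with open(path) as f:
        content = f.read()

print("locale default:", default_encoding)
print("used by open():", used_encoding)
```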

Use case: the files to be processed are nominally in a consistent encoding, you know the exact encoding in advance and it is acceptable to refuse to process files that are not properly encoded. This is becoming more and more common, especially with many text file formats beginning to standardise on UTF-8 as the preferred text encoding.

Approach: open the file in text mode with the appropriate encoding

Example:

    f = open(fname, encoding='utf-8')

Consequences:

- UnicodeDecodeError may be thrown when reading such files (if the data is not actually in the specified encoding)

- UnicodeEncodeError may be thrown when writing such files (if attempting to write out code points which have no representation in the target encoding).

- the surrogateescape error handler can be used to be more tolerant of encoding errors if it is necessary to make a best effort attempt to process files that contain such errors instead of rejecting them outright as invalid input.
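The difference between rejecting a badly encoded file and making a best effort attempt can be sketched as follows. The sample bytes (valid UTF-8 followed by a stray 0xFF) and the file name are made up for illustration.

```python
import os
import tempfile

# Mostly valid UTF-8, with one byte (0xFF) that can never appear in UTF-8.
damaged = b"valid \xc3\xa9 then invalid \xff\n"

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "damaged.txt")
    with open(path, "wb") as f:
        f.write(damaged)

    # Strict decoding: the file is rejected as invalid input.
    try:
        with open(path, encoding="utf-8") as f:
            f.read()
        rejected = False
    except UnicodeDecodeError:
        rejected = True

    # Best effort: keep the valid text and escape only the bad byte.
    with open(path, encoding="utf-8", errors="surrogateescape", newline="") as f:
        tolerant = f.read()

print(rejected)
print(ascii(tolerant))
```

Unlike the ascii based approach, the valid UTF-8 sequence is decoded to a real character here, and only the byte that failed to decode is escaped.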

Use case: the files to be processed include markers that specify the nominal encoding (with a default encoding assumed if no marker is present) and it is acceptable to refuse to process files that are not properly encoded.

Approach: first open the file in binary mode to look for the encoding marker, then reopen in text mode with the identified encoding.

Example:

f = tokenize.open(fname) uses PEP 263 encoding markers to detect the encoding of Python source files (defaulting to UTF-8 if no encoding marker is detected)

Consequences:

- can handle files in different encodings

- may still raise UnicodeDecodeError if the encoding marker is incorrect

- must ensure marker is set correctly when writing such files

- even if it is not the default encoding, individual files can still be set to use UTF-8 as the encoding in order to support encoding almost all Unicode code points

- the surrogateescape error handler can be used to be more tolerant of encoding errors if it is necessary to make a best effort attempt to process files that contain such errors instead of rejecting them outright as invalid input.
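As a small demonstration of marker based detection with tokenize.open, the sketch below writes two tiny Python source files, one with a PEP 263 marker declaring latin-1 and one without any marker. The file names and contents are invented for the example.

```python
import os
import tempfile
import tokenize

# A source file that declares latin-1 and contains the byte 0xE9 ("é").
marked = b"# -*- coding: latin-1 -*-\nname = 'caf\xe9'\n"
# A source file with no marker, so UTF-8 is assumed.
unmarked = b"x = 1\n"

with tempfile.TemporaryDirectory() as tmp:
    marked_path = os.path.join(tmp, "marked.py")
    unmarked_path = os.path.join(tmp, "unmarked.py")
    with open(marked_path, "wb") as f:
        f.write(marked)
    with open(unmarked_path, "wb") as f:
        f.write(unmarked)

    # tokenize.open reads the marker in binary mode, then returns a
    # text mode file object that uses the detected encoding.
    with tokenize.open(marked_path) as f:
        marked_encoding = f.encoding
        marked_text = f.read()
    with tokenize.open(unmarked_path) as f:
        unmarked_encoding = f.encoding

print(marked_encoding, unmarked_encoding)
print(ascii(marked_text))
```

Other file formats need their own detection step (for instance, reading a declaration from the first bytes of the file), but the overall shape of binary sniffing followed by a text mode open stays the same.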